The rise of social media is one of the big stories of the twenty-first century. Facebook may only be 21 years old, but, according to a Pew Research study of 2024, more than two-thirds of Americans now use it. YouTube has an even larger footprint, with 83% of American adults using the platform to consume media.

The vast majority of such services and platforms charge their users no direct fees. Consumers, in effect, get to use the social media service in return for allowing the platform access to their data, which can, amongst other things, allow paid-for adverts to be targeted directly at them. That said, given that Facebook currently seems somewhat obsessed with tempting your columnist into styling his hair with sea salt or purchasing shoes which will add three inches to his height, the accuracy of this targeting is, perhaps, not always what the companies involved might hope for.

Nonetheless, this is big business. Indeed, over 80% of total American advertising spending aimed at consumers is now digital. But as the size and economic footprint of social media firms have grown, so too have concerns over their handling and use of data.

The potential harms come in various guises. The risk of data breaches, with private information becoming public, is an obvious one, but consumers may well also intrinsically value their own privacy and be, at times, unaware of how much of their data they have effectively signed over to third-party firms.

Alongside the privacy concerns, there is also a rising tide of worry about the impacts of social media on mental health, child safety, and sometimes even on social cohesion more broadly.

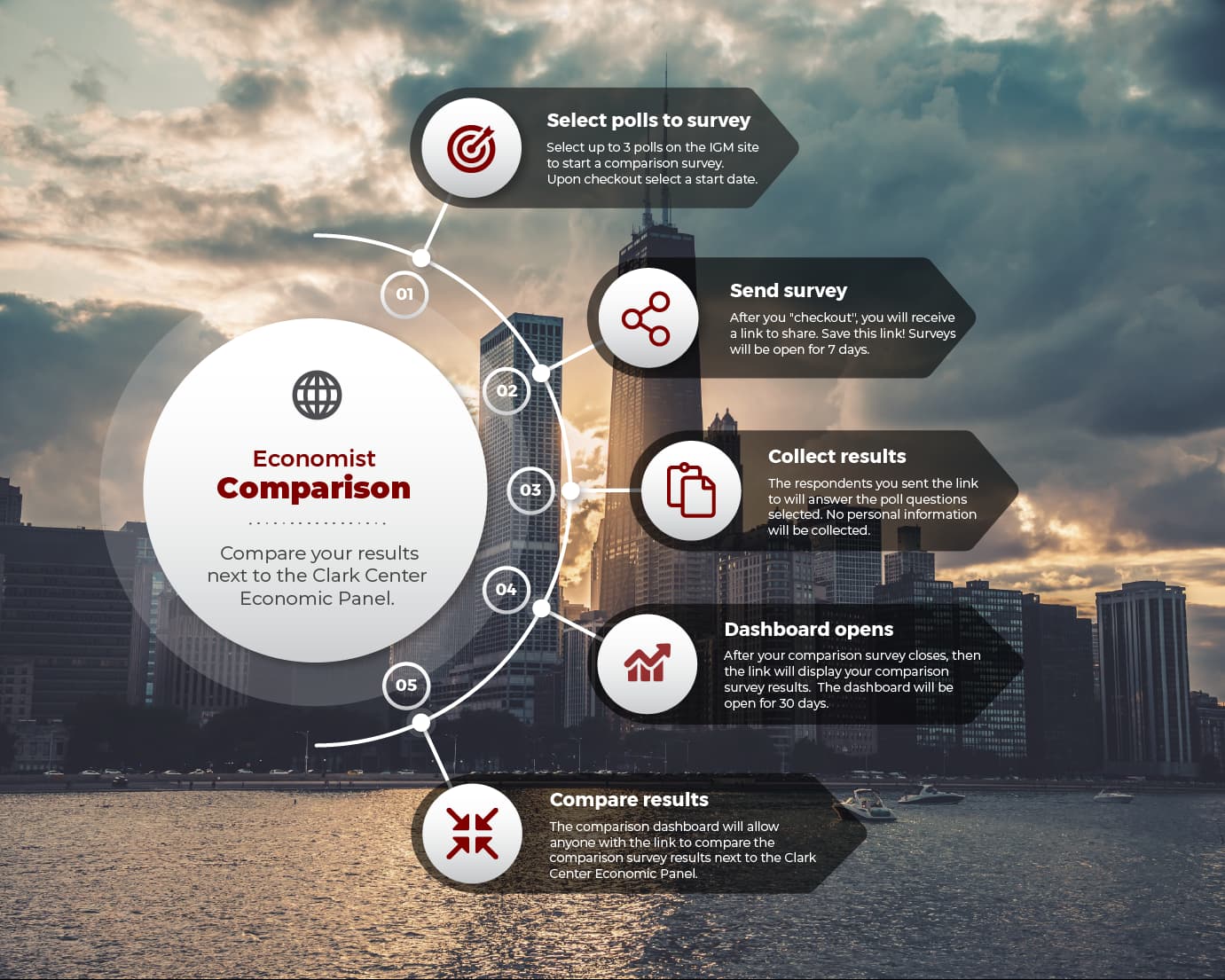

This week, the Clark Center’s US Experts Panel looked at the question of regulation.

They were first asked whether ‘the potential for consumers to be harmed by digital platforms’ use of their personal data is sufficient that they would benefit from laws assigning them some kind of default control rights over their data?’

Overall, the panellists were supportive of calls for more regulation. Weighted by confidence, 34% of respondents strongly agreed and 46% agreed. That said, several of the respondents noted that the details of such a law would matter. Regulating data privacy may well be easier in theory than in practice.

Some respondents who supported greater regulation warned about the need to avoid a measure such as the European Union’s General Data Protection Regulation (GDPR), which is often seen as clunky and adding to costs.

GDPR, which has been in force in the EU since 2018, seeks to enhance the rights of consumers of digital services and provide protections over their privacy. Firms face large fines for breaches.

The tech industry argues that GDPR has held back innovation in Europe and made digital services more costly. A review of the economic literature, published in 2022, noted that:

> The economic literature on the GDPR to date has largely—though not universally—documented harms to firms. These harms include firm performance, innovation, competition, the web, and marketing. On the elusive consumer welfare side, the literature documents some objective privacy improvements as well as helpful survey evidence. The literature also examines the consequences of the GDPR’s design decisions and illuminates

The paper also noted the difficulties inherent in quantifying the impact of a measure such as GDPR, given ‘the difficulty of finding a suitable control group, variable firm compliance and regulatory enforcement as well as the regulation’s impact on data observability’.

It does seem likely that consumers have made some gains from the new protections, but these are hard to measure, whilst the costs to firms are more obvious. Interestingly, researchers in another study found some evidence that GDPR may have given a further advantage to larger firms over their smaller peers:

> Regarding firms, we find two effects that partially offset each other. On the one hand, we find that advertising prices increase, which can be explained by the increased trackability of consumers. However, our analysis also shows that smaller advertisers such as ours, who are dependent on third party access, are able to collect less data and conduct less business due to consumer opt-out. This puts them at a disadvantage relative to large companies who rely on their first-party ecosystem to collect data. Thus, our article has broader implications beyond the online travel industry and keyword-based advertising markets. Firms in this industry, as others in the digital economy, increasingly compete with the large technology firms such as Google whose reach spans across many different online markets and for whom consumers have little choice but to accept data processing. Thus, although our results highlight that increased consent requirements may not be wholly negative, if consumers are similarly using such opt-out capabilities at our estimated rates in other markets (such as behaviorally-targeted advertising markets), then such regulation may put smaller firms at a disadvantage relative to the internet giants.

The panel was also asked, stepping beyond the privacy question, whether ‘the risks of harm from use of social media services – such as harm to mental health, exploitation of children, and more – are now high enough that society would benefit from federal regulations setting safety standards and creating a process of compensation for harm by digital platforms?’

Once again, there was broad agreement. Weighted once more by confidence, 20% of respondents strongly agreed and 53% agreed. David Autor, of MIT, pointed, for example, to a study showing that Instagram harms the mental health of young women.

In general, the panel is strongly in favour of a tougher regulatory approach to social media and online privacy. But, as is so often the case, the devil is in the details when it comes to actually legislating.